- AI Transformation Starts With the Change, Not the Technology

- Your Team Doesn’t Understand Their Jobs (And That’s About to Matter)

- The Empathy Gap in AI Transformation

- Getting Your Team to Think Strategically About AI

- Building an Environment of Possibilities – Where AI Innovation Actually Happens

- Who Owns Your AI Transformation?

The gap between knowing a job and understanding it has been invisible for decades. AI is about to make it the most important distinction on your team.

I was sitting in a conference room, watching a perfectly competent person explain why a new system was going to ruin everything.

“I look at the TPS Report every morning,” they said, and I could tell from their voice that this was the most important part of their day. “I check this column for anything negative, and if something’s off, I flag it and send it to operations. If you change the layout of this report, I can’t do my job. You’re going to have to make the new version look exactly like the old one.”

They weren’t being difficult; they were being honest. That report, in that layout, with that column in that position – that was their job. Not the analysis behind the numbers, not the decision-making that happened downstream, not the business outcome the report was designed to support – just the report itself. The ritual of scanning it every morning was so deeply wired into their routine that the format had become the function.

I’ve thought about that conversation a lot over the years, because they weren’t unusual. They were the norm. Most people in most organizations don’t fully understand what they do. That sounds harsh, and I don’t mean it to be. They didn’t build the process they’re following – they inherited it from someone who’s long gone, learned it from a coworker who learned it from someone else, and over time the why behind each step faded away until all that was left was the how. They know their jobs. They just don’t understand them. And for a very long time, that distinction didn’t matter much. Both the person who understood the process and the person who just knew the steps produced acceptable work. The business kept running. Nobody asked uncomfortable questions.

AI is changing that comfortable truth. Not gently, not gradually – it’s making the gap between knowing and understanding visible in ways that are impossible to ignore. And if you’re leading a team through any kind of AI transformation, this is the single most important thing to get right about your team before you get anywhere near the technology.

The Difference Between Knowing and Understanding

The distinction is simple, but it runs deep. Knowing your job means you can execute it. You follow the steps, you check the boxes, you produce the expected output. If everything stays the same, you’re fine. Understanding your job means you know why those steps exist, what they’re trying to accomplish, what happens downstream when you get it wrong, and what “good” actually looks like beyond just completing the task.

The TPS Report person knew their job. They could describe the exact sequence: open the report, scan this column, flag the negatives, send the flags to operations. Ask them what they did every morning and you’d get a precise answer. Ask them why – why that column, why negatives matter, what operations does with the flags, what business outcome depends on catching those exceptions – and you’d get a much less confident response. Not because they were lazy or careless, but because nobody ever asked them to think about it that way. The process worked, so why would they?

This pattern shows up everywhere, and it’s not limited to report-readers. Think about the person in accounts payable who knows which invoices to flag for review, but can’t explain the cash flow implications of getting it wrong. Or the production supervisor who knows the manufacturing sequence cold but couldn’t tell you why it’s in that order, or what would happen if you rearranged two steps. Or the sales operations analyst who builds the weekly forecast spreadsheet with extraordinary precision, but has never had a conversation about how the forecast drives inventory decisions three departments away.

These are all competent people doing competent work. The gap between knowing and understanding doesn’t make them bad at their jobs – it makes them brittle. As long as the inputs stay familiar and the process doesn’t change, they perform well. But the moment something shifts – a new system, a reorganization, a different reporting structure – they don’t have the foundation to adapt. They can tell you what broke, but not why it matters or how to fix it in a way that serves the bigger picture.

And here’s the part that makes this uncomfortable: the gap is largely invisible during normal operations. The person who knows their job and the person who understands it produce similar-looking work on any given Tuesday. The difference only shows up when things change. Which is exactly what’s about to happen.

Why AI Makes the Gap Visible

For most of the technology waves I’ve lived through – ERP, the internet, IoT, cloud – the knowing-vs-understanding gap mattered, but it revealed itself slowly. A new ERP system would roll out, and the people who only knew their jobs would struggle with the new screens and workflows for a few weeks. Or maybe a few months … and then they’d eventually learn the new how and settle back into routine. The underlying gap was still there, but the business could absorb it – because the pace of change gave everyone time to adjust.

AI doesn’t work that way. It compresses the timeline in a way that’s genuinely different from anything that came before, and it targets a very specific kind of work: the work that can be described as a set of steps, inputs, and pattern-matching rules. In other words, it targets knowing work.

Go back to the TPS Report. The job was: open report, scan column, identify negatives, flag exceptions, send to operations. That’s a description an AI tool can execute today – not theoretically, not in some future release, but right now. Feed it the data, tell it what “negative” means in context, and it will scan that column faster and more accurately than any person, every single morning, without coffee.
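To make that concrete, here is a minimal sketch of the TPS Report routine as a few lines of Python. Everything in it is an assumption for illustration: the `ReportRow` shape, the `variance` column name, and the sample rows are hypothetical stand-ins, not the real report. The point is only how little it takes to write down the "knowing" version of the job.

```python
from dataclasses import dataclass


@dataclass
class ReportRow:
    account: str     # hypothetical row label
    variance: float  # hypothetical name for "this column"


def flag_negatives(rows):
    """Return every row whose watched column is below zero."""
    return [row for row in rows if row.variance < 0]


# Made-up sample data standing in for the morning report.
report = [
    ReportRow("Line A", 1250.0),
    ReportRow("Line B", -310.0),
    ReportRow("Line C", 0.0),
    ReportRow("Line D", -45.5),
]

flags = flag_negatives(report)
for row in flags:
    # In the story, this is the "send it to operations" step.
    print(f"Flag for operations: {row.account} ({row.variance})")
```

Notice what the sketch leaves out: nothing in it says why a negative matters or what operations does with the flag. It captures the how and none of the why.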

That’s the easy win, and every organization can see it. Automate the scanning, the flagging, the pattern-matching. You’ll save time, reduce errors, and free people up. It’s obvious, it’s valuable, and it’s where most companies stop.

But it’s the floor, not the ceiling. The real value – the kind that changes how a business operates – comes when someone who understands the work can teach AI the context behind it. Why that particular negative number is expected this month because of a seasonal pattern that nobody ever documented. How the exceptions in this report connect to inventory decisions three departments away. Which flags actually matter and which ones are noise that everyone learned to ignore years ago. That kind of knowledge has been locked inside people’s heads forever, and AI gives you a way to capture it, scale it, and act on it in ways that were never practical before.
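As a rough illustration of what "teaching the context" might look like, the sketch below layers a few pieces of undocumented knowledge on top of a plain negative-number check. All of the names and data here are hypothetical assumptions: the seasonal exceptions, the noise list, and the downstream mapping stand in for whatever your own people carry in their heads.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Row:
    account: str
    month: int       # 1-12
    variance: float


# Hypothetical context that only someone who understands the work could supply.
EXPECTED_SEASONAL_DIPS = {("Line B", 2)}        # (account, month) where a negative is normal
KNOWN_NOISE = {"Line D"}                        # flags everyone learned to ignore
DOWNSTREAM = {"Line E": "inventory planning"}   # who actually depends on this number


def triage(row: Row) -> Optional[str]:
    """Return a message for exceptions that matter, or None for everything else."""
    if row.variance >= 0:
        return None
    if (row.account, row.month) in EXPECTED_SEASONAL_DIPS:
        return None  # expected this month, so not an exception
    if row.account in KNOWN_NOISE:
        return None  # negative, but nobody acts on it
    affected = DOWNSTREAM.get(row.account, "operations")
    return f"{row.account}: {row.variance} is unexpected; notify {affected}"


# Made-up rows for a February report.
rows = [
    Row("Line B", 2, -310.0),
    Row("Line D", 2, -45.5),
    Row("Line E", 2, -820.0),
]
messages = [m for m in (triage(r) for r in rows) if m is not None]
for m in messages:
    print(m)
```

The code itself is trivial. The three lookup tables are the hard part, and they can only be filled in by someone who understands the work rather than just knowing the steps.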

This is where the knowing-vs-understanding gap stops being a philosophical distinction and starts showing up on the balance sheet. An organization that only knows its work will automate the surface – the scanning, the sorting, the obvious stuff. They’ll get modest returns and wonder what all the fuss was about. An organization that understands its work can teach AI to do things they never had the time or capacity to do themselves. They can build systems that don’t just flag exceptions but explain why they matter, predict what’s coming next, and recommend actions based on context that used to live in one person’s head.

The gap between those two outcomes is enormous, and it maps directly to the gap between knowing and understanding on your team. The people who understand their work aren’t just more adaptable – they’re the ones who can unlock the value that everyone’s promising AI will deliver. The people who only know the steps can tell AI what to do, but they can’t teach it why. And without the why, you’re automating the easy parts and leaving the real value on the table.

The Uncomfortable Truth About Leaders

It’s easy to read all of this and think about your team – the folks on the floor, the analysts, the people doing the daily work. But the knowing-vs-understanding gap doesn’t stop at the org chart’s midpoint. It runs all the way up.

I’ve sat in plenty of leadership meetings where the conversation about AI sounded remarkably like the TPS Report conversation, just dressed up in executive language. “We need to automate our reporting.” “Let’s use AI to improve our forecasting.” “Can we get a chatbot for customer service?” These are all knowing statements. They describe what the leader wants without demonstrating why it matters to the business or how it connects to anything else. Swap the word “AI” for “ERP” or “cloud” and you could be in a meeting from twenty years ago.

A leader who understands the work asks different questions. Not “can we automate this report?” but “what decisions does this report drive, and are we making those decisions well enough today?” Not “can AI improve our forecasting?” but “where is our forecast breaking down, and is the problem the math or the assumptions?” The questions reveal whether you actually understand the system you’re managing or whether you’re just managing the outputs.

This isn’t about intelligence or effort. I’ve known plenty of smart, hard-working leaders who knew their operations inside and out – every metric, every dashboard, every quarterly target – but couldn’t explain the connective tissue between those metrics and the business outcomes they were supposed to drive. They inherited their management processes the same way the TPS Report person inherited theirs: learn what to look at, learn what’s expected, produce the right outputs in the right meetings. The knowing worked fine because the pace of change was slow enough to adapt on the fly.

But if you’re about to lead an AI transformation and you don’t understand your own processes deeply enough to explain which parts require human judgment and which don’t, you’re going to make bad decisions about where to invest. You’ll automate the visible, obvious stuff and miss the deeper opportunities that only come from understanding how the pieces fit together. Worse, you won’t be able to evaluate whether AI is actually delivering value or just producing impressive-looking outputs that nobody trusts enough to act on.

And your team will notice. They always do. If you’re asking them to develop a deeper understanding of their work while you’re still operating at the knowing level yourself, the message lands as “do as I say, not as I do.” The credibility gap kills the initiative faster than any technology problem ever could.

You Can’t Just Ask

So you’ve got a gap to close – on your team and possibly in yourself. The obvious next move is to assess it. Figure out who understands their work and who just knows the steps, then build a plan to close the distance.

Please don’t do this.

Or rather, please don’t do it the obvious way. You absolutely cannot walk into a team meeting and say “I need to find out if you all really understand what you do.” It’s insulting, even if you don’t mean it to be. (Come on … not just a little?) And it won’t give you useful information anyway, because every person in that room will say yes. Not because they’re dishonest, but because admitting you don’t understand something you’ve been doing for five years feels like admitting failure. Human nature makes us avoid that, especially in public, and especially when the subtext is “we’re about to bring in AI and some of you might not be needed.”

The assessment has to happen sideways. You need to create situations where the gap reveals itself naturally, without anyone feeling tested.

One approach that’s worked for me is what I think of as the “teach it” test. Ask someone to explain their process to a new hire – real or hypothetical. Not the steps, but the reasoning. “Walk me through what you do, but focus on why each step matters and what would go wrong if we skipped it.” The people who understand will light up. They’ll tell you about the time something went sideways and what they learned. The people who only know will describe the steps in more detail, because that’s all they have. You’ll hear the difference immediately, and nobody had to admit ignorance.

Another is to ask “what if” questions in the normal course of work. “What would happen if this input changed by 20%?” “If we stopped producing this report tomorrow, who would notice first and why?” “What’s the dumbest thing a new person could do with this data?” These aren’t trick questions – they’re genuine curiosity about how well your team understands the system they operate in. And they’re a lot less threatening than “do you understand your job?”

You can also pay attention to how people react when something breaks. The person who only knows will tell you what’s different from normal. “This number is usually positive and today it’s negative.” The person who understands will tell you what it means. “This went negative because our largest customer delayed their order, and that’s going to ripple into next month’s production schedule.” Same observation, completely different depth.

The point isn’t to build a scorecard or sort people into categories. It’s to develop an honest picture of where your organization’s understanding lives – and where it doesn’t – so you can close the gap before AI makes it painfully obvious. Because once you’ve deployed an AI tool that does the knowing work better than your team, the people who can’t step up to understanding work are going to feel it. And by then, you’re managing a morale problem on top of a capability problem.

Closing the Gap Before AI Opens It

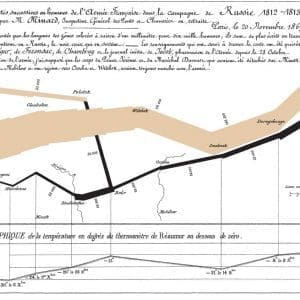

If you’ve been following this series, you’ll recognize that this is really an Operations and Data conversation wearing a people hat (“Howdy, howdy, howdy”). Understanding your job means understanding information flows – where data comes from, what transforms it, where it goes, who depends on it downstream. The people who understand their work are the ones who can trace those flows across systems and departments. The people who only know their work see their piece and nothing else.

So the practical work of closing the gap looks a lot like process archaeology. Walk through your key workflows – not just the steps, but the why behind each one. Find the places where the original reasoning has been lost, where “we’ve always done it this way” is the only explanation anyone can offer. Map the tribal knowledge that lives in people’s heads and hasn’t been documented because nobody ever needed to. That knowledge is exactly what makes the difference between automating the surface and teaching AI to deliver real value.

This isn’t a six-month initiative. You can start tomorrow, in the next conversation you have with someone on your team about how they do their work. Just shift the question from what to why, and listen carefully to what comes back.

But here’s the part I want to be honest about: this work is harder than it sounds, because it requires something most leaders underestimate. Asking people to examine their own understanding – to admit what they don’t know about the work they’ve been doing for years – takes trust. And building that trust requires genuine empathy for what it feels like to watch your comfortable, familiar world start to shift underneath you.

That’s where we’re going next. In the next article, we’ll talk about the empathy gap in AI transformation – and why the leaders who close it first are the ones whose teams actually make it through the other side.

If you’re working through how to lead AI into your business and want practical frameworks – not vendor hype – join our mailing list for the rest of this series and more.

24 February, 2026
