AI and the Data Value Chain: Where the Bottleneck Moved

AI compressed the technical middle of the Data Value Chain, shifting the bottleneck to the human bookends – Insight and Present. Most organizations haven’t noticed.

I tried to write this article a couple of years ago, and looking back at it now, I can see exactly where I went wrong. I had the Data Value Chain framework – seven links, Insight through Present, a model I’d been refining for over a decade – and I wanted to show how generative AI was going to reshape it. Reasonable enough. So I walked through the chain link by link. Here’s how AI helps with Architect. Here’s how it helps with Generate. Here’s a neat example for Store. I found some links to articles about ChatGPT prompts for data extraction, added a few observations about code generation, and called it a day.

It read fine. It was organized, it was accurate, and it completely missed the point. I’d written a checklist when what I should have written was an argument. Because the interesting thing about AI and the Data Value Chain isn’t that AI can lend a hand at every step – of course it can, the same way a calculator helps with every kind of math. The interesting thing is that AI changed where the hard work lives in the chain. It compressed the middle and left the ends more exposed than ever. And that shift – where the bottleneck moved, not whether AI is helpful – is what actually matters for how you build your team and invest your money.

I’ve watched bottlenecks move before. Every major technology wave I’ve lived through has done this. The constraint shifts, the investment thesis changes, and the organizations that figure it out early get a few years of real competitive advantage before everyone else catches up. The ones that don’t figure it out keep pouring money into the place where the problem used to be, wondering why all this new technology isn’t making them smarter.

A Quick Refresher

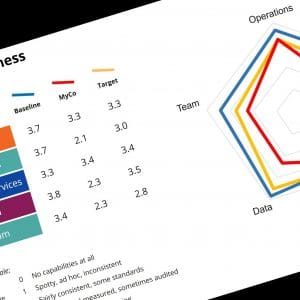

If you haven’t read the first article in this series, here’s the short version. The Data Value Chain is a framework I developed to describe the seven distinct skills required to turn raw data into business decisions. Each link in the chain requires a different kind of expertise, and – this is the part that matters – you almost never find all seven in one person.

The seven links, in order: Insight is the spark of imagination that figures out which questions are worth asking. Architect designs the infrastructure to handle the data. Generate pulls data from its sources. Store manages the physicality of keeping it. Process scrubs, matches, and normalizes it. Analyze looks for patterns and meaning. Present translates complex findings into something that changes how a decision-maker thinks.

I group these into the technical middle – Architect through Analyze – and the bookends: Insight at the front, Present at the back. The bookends are where data connects to business reality. The middle is where the technical heavy lifting happens. In the previous article, I made the case that the bookends are the hardest links to staff, because the skills they require (business imagination, strategic communication) don’t show up on a resume and can’t be screened with a technical assessment.

That distinction – technical middle versus human bookends – is where AI changes the story.

Where the Hard Work Used to Live

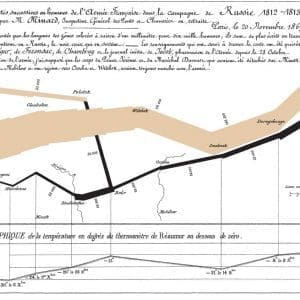

Every technology era has a bottleneck in the data chain, and it’s never the same one twice. The pattern is so consistent that once you see it, you can practically predict where the next shift will land.

When I started my career, the constraint was Generate and Store. Getting data out of mainframe systems was a genuine technical achievement – you needed specialized skills just to extract information from the places it lived, and storing anything beyond transactional records meant real engineering work. The people who could pull data from an AS/400 and put it somewhere useful were the scarce resource. Everything else in the chain waited on them.

Then ERP systems showed up and changed the equation. Suddenly you had integrated databases with standardized schemas, and the Generate and Store problems got a lot more manageable. But the bottleneck didn’t disappear – it moved. Now the hard work was Architect and Process. Connecting systems that were never designed to talk to each other, cleaning master data that had been entered differently in every division, building the plumbing that let information flow across the enterprise. I spent years of my career in this era, and I can tell you that the organizations that kept investing in better data extraction when the real problem was data architecture and process discipline were always a step behind. They had plenty of data. They just couldn’t make it consistent or trustworthy enough to act on.

The internet and cloud era shifted things again. Architecture patterns standardized, cloud platforms commoditized storage and processing, and the analytical tools got dramatically more accessible. The middle of the chain – the technical infrastructure – was still important, but it was no longer the thing that separated organizations that got value from data from those that didn’t.

Each time, the pattern was the same. The technology solved the current bottleneck, and the constraint moved to wherever the people skills hadn’t kept up. Organizations that recognized the shift early and redirected their investment – retraining, rehiring, reorganizing – got a few good years of competitive advantage. The ones that kept pouring money into the old bottleneck accumulated impressive technical capabilities that nobody could connect to business outcomes.

The Middle Got Fast

So where did the bottleneck move this time? To answer that, look at what AI actually does to the five technical links in the middle of the chain.

Start with Architect. Designing a data infrastructure used to mean weeks of whiteboarding, careful schema design, and expensive specialists who understood the tradeoffs between flexibility and performance. It still requires good judgment – but the execution speed has changed dramatically. AI code generation can scaffold a database schema in minutes. It can suggest architecture patterns based on your requirements, generate the boilerplate, and even flag potential scaling issues before you’ve written your first query. The architect’s job didn’t disappear, but the ratio of thinking to typing shifted heavily toward thinking.

Generate tells a similar story. Pulling data from sources – writing extraction routines, building API connections, handling the weird edge cases where a legacy system stores dates as six-digit integers – used to be painstaking, specialized work. AI handles the routine cases almost entirely now. Point a coding assistant at an API spec and you’ll have a working extraction pipeline before lunch. The edge cases still need a human, but there are fewer of them, and the human gets to start from a working draft instead of a blank screen.
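To make the six-digit date edge case concrete, here is a minimal Python sketch of the sort of draft a coding assistant might hand back. It assumes the legacy system packs dates as YYMMDD integers and that two-digit years below a pivot of 70 belong to the 2000s. The function name, the layout, and the pivot are illustrative assumptions, not details of any real system.

```python
from datetime import date


def parse_six_digit_date(value: int, pivot: int = 70) -> date:
    """Convert a YYMMDD integer (for example 990415) into a date.

    Assumption: two-digit years below `pivot` are read as 20xx,
    everything else as 19xx. A real system may use another rule.
    """
    if not 0 <= value <= 999999:
        raise ValueError(f"expected at most six digits, got {value!r}")
    yy, rest = divmod(value, 10000)   # leading two digits are the year
    mm, dd = divmod(rest, 100)        # then month, then day
    century = 2000 if yy < pivot else 1900
    # date() raises ValueError for impossible values such as month 13
    return date(century + yy, mm, dd)
```

The human part is everything the sketch takes for granted: whether the field is really YYMMDD rather than MMDDYY, and which century a given two-digit year belongs to.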

Store and Process have been moving in this direction for a while, honestly. Cloud platforms already commoditized a lot of the storage engineering, and AI accelerated that further with automated optimization, intelligent tiering, and cost management that would have required a dedicated team a decade ago. Process – the data cleaning and normalization work that has always been the most tedious link in the chain – is where AI might be making the most dramatic difference. Matching records, detecting anomalies, standardizing formats, filling gaps in metadata. These tasks used to eat enormous amounts of skilled human time. They still need oversight, but the grunt work has collapsed.
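For a sense of what the Process grunt work looks like, here is a small Python sketch of format standardization and record matching using only the standard library. The helper names and the 0.85 similarity threshold are assumptions chosen for illustration. A production pipeline would likely use a dedicated matching tool and a tuned threshold.

```python
import re
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase, replace punctuation with spaces, collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", " ", name.lower())
    return " ".join(cleaned.split())


def likely_same(a: str, b: str, threshold: float = 0.85) -> bool:
    """Flag two names as probable duplicates (threshold is an assumption)."""
    ratio = SequenceMatcher(None, normalize(a), normalize(b)).ratio()
    return ratio >= threshold
```

Code like this flags candidates. Deciding whether two flagged records really are the same customer is the oversight that stays with a person.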

Analyze is the most nuanced of the five, because it sits right next to the bookend. AI can find patterns in data faster than any human analyst. It can run thousands of correlations, surface anomalies, and generate hypotheses at a pace that would have been unthinkable even five years ago. But there’s a line – and it’s an important one – between pattern detection and business understanding. AI can tell you that sales dropped 15% in the Southeast region last quarter. It can even correlate that with weather patterns, competitor pricing, and supply chain disruptions. What it can’t do (not yet, and maybe not ever) is understand that the Southeast regional manager just reorganized her sales team, and that reorganization is the actual story the data is trying to tell. Analyze got faster, but the part of analysis that requires knowing the business stayed exactly where it was.

Add it all up and something structural has happened. The five links that used to represent the expensive, slow, specialized core of the data chain – the part where organizations spent most of their money and most of their recruiting energy – got dramatically faster and cheaper. Not free, not automatic, and not without skilled oversight. But the relative cost and difficulty of the technical middle, compared to where it was even five years ago, dropped through the floor.

And that changes where the constraint lives.

The Bookends Didn’t Move

Here’s the thing about compressing the middle of the chain: it doesn’t make the ends easier. It makes them harder. Or more precisely, it makes them matter more – which has the same practical effect.

Think about what happens when the technical middle gets fast. More data moves through the chain. More analyses get run. More dashboards get built. More reports land on more desks. The infrastructure is humming, the pipelines are flowing, and the organization is absolutely drowning in output that nobody asked for and nobody knows what to do with.

That’s the Insight problem, amplified. When it took six months to build a data pipeline and three weeks to run an analysis, the bottleneck was obviously in the middle, and whatever questions did make it through the chain got attention because there were so few of them. Now the middle runs in hours. You can ask a hundred questions a week. The constraint isn’t whether you can answer the question – it’s whether you’re asking one worth answering. Insight – that spark of business imagination that knows which questions lead to real value – suddenly becomes the scarcest resource in the chain. And unlike the technical middle, you can’t compress it with AI. You can brainstorm with a chatbot, sure. You can ask it to generate hypotheses. But the ability to look at a business problem and know which data would actually illuminate it – that comes from deep domain experience and pattern recognition that no model can replicate. It’s Pete’s complaint from a decade ago, except now it’s urgent instead of aspirational.

Present has the same problem from the other direction. When the chain produces more output faster, the last mile – translating findings into something that changes a decision-maker’s mind – gets exponentially more important. And exponentially more crowded. Your executives aren’t seeing one analysis a month now. They’re seeing ten a week, and most of them are competent, data-rich, and completely forgettable. The person who can take a complex finding and lead with the one insight that matters – who can read a room, understand what the audience actually needs to hear, and communicate it in a way that drives action – that person is more valuable than ever. AI can generate charts. It can draft narrative summaries. It can even suggest which visualization type best fits the data. But it can’t tell you that your CFO doesn’t trust any analysis that doesn’t start with the financial impact, or that the operations VP will tune out if you open with methodology instead of results. That’s communication as a strategic act, and it’s stubbornly, irreducibly human.

The bookends didn’t just stay the same. They became the constraint. And most organizations haven’t realized it yet, because they’re still measuring success by how much data flows through the middle.

The Mismatch

Look at where most organizations are spending their AI and data budgets right now. New platforms. Better pipelines. Automated data quality tools. Faster analytics engines. Modern data stacks. These are all investments in the technical middle of the chain – the five links that AI already compressed.

I’m not saying those investments are wasted. The middle still needs to work. You can’t skip it. But if you’re pouring 80% of your budget into making the fast part faster while the bookends are understaffed and undertrained, you’ve got a mismatch between where you’re investing and where your constraint actually lives. It’s the equivalent of a factory that keeps buying faster machines for a production line that’s bottlenecked at quality inspection. The machines aren’t the problem. The inspection station is.
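The factory analogy can be put into numbers. The sketch below treats the chain as a sequence of links whose overall output is capped by the slowest one. The capacity figures are invented for illustration (say, decisions supported per month) and do not come from any measurement.

```python
def chain_throughput(capacity: dict) -> tuple:
    """Return the constraining link and the throughput it allows."""
    bottleneck = min(capacity, key=lambda link: capacity[link])
    return bottleneck, capacity[bottleneck]


# Hypothetical capacities for each link of the Data Value Chain.
capacity = {
    "Insight": 4, "Architect": 40, "Generate": 60, "Store": 80,
    "Process": 50, "Analyze": 30, "Present": 6,
}

before = chain_throughput(capacity)

# Spend the budget on the middle: Process and Analyze double.
faster_middle = {**capacity, "Process": 100, "Analyze": 60}
after_middle = chain_throughput(faster_middle)

# Spend it on a bookend instead: Insight doubles.
stronger_insight = {**capacity, "Insight": 8}
after_insight = chain_throughput(stronger_insight)
```

With these made-up numbers, doubling Process and Analyze leaves the chain capped by Insight at 4, while doubling Insight lifts the cap to 6 and moves the constraint to Present.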

The hiring patterns tell the same story. Post a job for a data engineer and you’ll get two hundred resumes. Post a job for someone who combines deep business understanding with the ability to formulate data-driven questions that nobody else is asking – someone who can do Insight at a high level – and you’ll get silence. Not because those people don’t exist, but because the job description doesn’t exist. Most organizations don’t even have a role that maps to the Insight link. They assume it happens organically, or they expect the data team to figure out what questions to ask on their own, or – worst of all – they put the entire burden on the executive suite and wonder why the data team keeps producing analyses that nobody requested and nobody acts on.

Present has the same structural problem. Organizations hire data visualization specialists and expect them to handle the last mile. But presentation in the Data Value Chain sense isn’t about making pretty charts. It’s about strategic communication – knowing what your audience needs to hear, leading with the finding that will actually change behavior, and having the organizational credibility to make it stick. That’s not a junior design role. That’s a senior skill that combines analytical depth with communication talent and business judgment. And it’s almost never on the org chart.

The fix isn’t complicated in concept. It’s just uncomfortable, because it means redirecting investment away from technology (which is concrete and budgetable) toward people and skills (which are ambiguous and harder to measure). It means hiring for curiosity and business imagination at the Insight end. It means developing strategic communication skills at the Present end. It means restructuring data teams so the bookends get as much attention as the middle. And it means accepting that the shiny new AI platform you just bought isn’t going to solve the problem if nobody on your team can ask a question worth answering or communicate a finding worth acting on. [1]

The Bottleneck Moved

The Data Value Chain still has seven links. AI didn’t eliminate any of them – I was right about that much in my first attempt at this article. But it fundamentally changed the economics of the chain by compressing the technical middle and amplifying the importance of the human bookends. The bottleneck moved from “can we build the infrastructure?” to “can we ask the right questions and communicate what we find?”

If that sounds familiar, it should. It’s the same shift that has happened with every major technology wave I’ve lived through. The technology solves the technical constraint, and the human constraint becomes the new frontier. What makes this round different is the speed and completeness of the compression. AI didn’t just make the middle a little faster. It made it fast enough that the middle is no longer the primary reason data initiatives fail.

The organizations that figure this out – that redirect their investment toward Insight and Present, that hire for curiosity and communication alongside technical skill, that restructure their teams around where the bottleneck actually lives today – those organizations are going to get disproportionate value from every dollar they spend on AI and data. The ones that don’t will keep building faster pipelines to nowhere.

And there’s another shift coming that makes this even more interesting. Everything I’ve described so far – the seven links, the compression, the bottleneck – assumes we’re talking about structured data. Rows and columns, metrics and KPIs, the kind of data that lives in databases and spreadsheets. But the overwhelming majority of what organizations know isn’t structured at all. It lives in emails, conversations, documents, and the heads of people who’ve been around long enough to know where the bodies are buried. AI is cracking that open too, and it changes the game in ways the Data Value Chain was never designed to handle. But that’s the next conversation.


Recommended Books

- The Goal by Eliyahu Goldratt – The classic on identifying and managing system constraints; the bottleneck argument in this article owes a debt to Goldratt’s thinking

- Storytelling with Data by Cole Nussbaumer Knaflic – A practical guide to the Present end of the Data Value Chain; data communication as a strategic skill

- Competing on Analytics by Thomas Davenport – Building organizations that treat data-driven decision-making as a competitive advantage, not just a technology initiative

3 April, 2026

- [1] This is where the Data Value Chain connects to Team Dynamics in the Building Blocks framework. The AI investment strategy is really a team development strategy.
