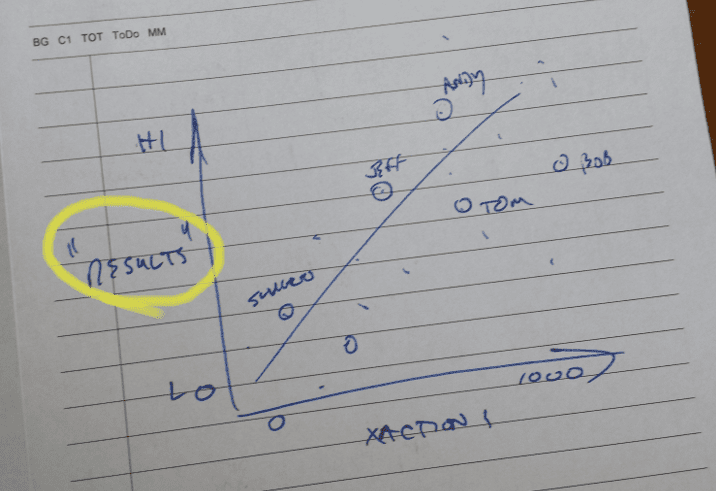

For our back-of-the-napkin experiment in tracking the useful value produced by enterprise systems, our next step moves away from the technical tools for a bit to look at results. Specifically, we want to understand who is “delivering the goods”, and get some sense of their success relative to others.

At first, the question sounds simple: how do you measure success? Metrics are a common element of any business activity, and people are often paid, and earn bonuses, by meeting and surpassing SMART (Specific, Measurable, Achievable, Relevant, Time-bound) goals and objectives. But there will be challenges. Not all teams will have the easily accessible, regularly tracked, individually attributable measurements that we need. And how do you compare different groups that use different metrics? For example, sales teams might use Revenue, New Orders, Profitability, Customer Satisfaction, or any number of other valid metrics. How can you genericize across the groups to get reasonably valid comparisons?

Selecting the Right Metrics

A good way to get people to see the relevance of a question like “Does system utilization drive performance?” is to use the Success Metrics that matter most to them. What metrics do they use to check and adjust their behavior, and to drive toward desired results? For sales folks using a CRM system, it might be Revenue Growth, Deal Quality, Profitable Sales, or something similar. For an Operations group, it might be On-Time Delivery, Overtime Cost Control, Inventory Levels, or any number of Cost of Poor Quality metrics.

For our needs, a Success Metric should meet three criteria:

- Well Established – Measurements have already been defined – no additional explanation, training, or development is required

- Has their Attention – Something that they look at every day, or at least once a month. If it drives their performance review, it’s a great candidate

- Identifiable to an Individual or Small Group – Some important metrics aren’t individualized – especially in the Operations area (such as On Time Delivery or Inventory Levels). However, if you can attribute a metric to a specific business unit, product line, work cell, or value stream, you may be able to aggregate system use by that same grouping of people.
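When a metric is only reported for a group, system usage has to be rolled up to that same group before the two can be lined up. A minimal sketch, assuming a hypothetical user-to-group mapping and a usage log with one entry per user action (neither the names nor the log format come from this series):

```python
from collections import Counter

# Hypothetical mapping of users to the group a metric is reported for
# (a work cell, product line, value stream, etc.).
user_group = {
    "alice": "Cell 1",
    "bob": "Cell 1",
    "carol": "Cell 2",
    "dave": "Cell 2",
}

# Hypothetical usage log: one entry per logged system action, by user.
usage_log = ["alice", "alice", "bob", "carol", "alice", "dave", "dave"]


def usage_by_group(log, groups):
    """Roll individual usage events up to the group level, skipping unmapped users."""
    return Counter(groups[user] for user in log if user in groups)


totals = usage_by_group(usage_log, user_group)
for group, count in sorted(totals.items()):
    print(f"{group}: {count} actions")
```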

Comparing Apples to Apples

To address the challenge of comparing multiple groups with different Success Metrics, we’ll need to “genericize” the measurements by converting each one to a 0–100% scale. For every metric, define what 100% success looks like and what 0% looks like, and then compute where each individual falls along that scale. This spreadsheet snippet shows the math in action for my sample SellCo Sales Team. I am measuring them by Average Deal Margin, where a 50% margin on a sale would be a home-run success. Note that I don’t consider a 10% margin on a deal acceptable performance, but 10% is my minimum margin on any sale, so that’s my floor.

(Note that Google Sheets and Microsoft Excel use the same syntax for the PERCENTRANK() function, so there’s no need to choose sides here.)
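Outside a spreadsheet, the same floor-to-ceiling scaling can be sketched as a straight line: (value − floor) / (ceiling − floor). We’re assuming a linear scale between the two points; if the spreadsheet passes only the floor and ceiling to PERCENTRANK(), it should interpolate the same way, though the exact formula behind the snippet isn’t shown. The `success_score` function and the rep names and margins are hypothetical, and clamping out-of-range results to 0% or 100% is our own design choice.

```python
def success_score(value: float, floor: float, ceiling: float) -> float:
    """Scale a raw metric onto 0.0 (floor) .. 1.0 (ceiling).

    Values outside the range are clamped, so a rep who beats the
    ceiling scores 1.0 rather than more than 100%.
    """
    if ceiling == floor:
        raise ValueError("ceiling and floor must differ")
    fraction = (value - floor) / (ceiling - floor)
    return max(0.0, min(1.0, fraction))


# SellCo example from the text: 10% margin is the floor, 50% is a home run.
MARGIN_FLOOR = 0.10
MARGIN_CEILING = 0.50

# Hypothetical average deal margins, for illustration only.
margins = {"Rep A": 0.42, "Rep B": 0.30, "Rep C": 0.10, "Rep D": 0.55}

for rep, margin in margins.items():
    print(f"{rep}: {success_score(margin, MARGIN_FLOOR, MARGIN_CEILING):.0%}")
```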

A more likely scenario: your team is rated on multiple metrics. At SellCo, we also value Total Revenue and (of course) the team’s Customer Satisfaction rating. With multiple metrics, we compute an overall Success Metric by averaging the three scores; in this case, Savino jumps into second place thanks to better performance in Total Revenue and Customer Satisfaction.
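The blending step is a plain average of each metric’s 0–100% score. A minimal sketch, assuming a simple unweighted mean; the floors and ceilings for Total Revenue and Customer Satisfaction, the metric key names, and the reps’ results are all hypothetical:

```python
from statistics import mean


def success_score(value, floor, ceiling):
    """Scale a raw metric to 0.0 (floor) .. 1.0 (ceiling), clamped."""
    fraction = (value - floor) / (ceiling - floor)
    return max(0.0, min(1.0, fraction))


# Hypothetical (floor, ceiling) pairs for SellCo's three metrics.
SELLCO_METRICS = {
    "avg_deal_margin": (0.10, 0.50),
    "total_revenue": (0, 2_000_000),
    "customer_satisfaction": (1.0, 5.0),
}


def blended_score(raw, metrics):
    """Average the per-metric scores into one overall Success Metric."""
    return mean(success_score(raw[name], lo, hi) for name, (lo, hi) in metrics.items())


# Hypothetical raw results for two reps.
team = {
    "Rep A": {"avg_deal_margin": 0.42, "total_revenue": 900_000, "customer_satisfaction": 3.8},
    "Rep B": {"avg_deal_margin": 0.36, "total_revenue": 1_600_000, "customer_satisfaction": 4.6},
}

ranking = sorted(team, key=lambda rep: blended_score(team[rep], SELLCO_METRICS), reverse=True)
for rep in ranking:
    print(f"{rep}: {blended_score(team[rep], SELLCO_METRICS):.0%}")
```

With these made-up numbers, Rep A leads on margin alone, but Rep B comes out ahead once revenue and satisfaction are averaged in: the same kind of shift the SellCo example shows for Savino.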

On the other hand, the MarketCo business unit has a different focus: instead of Total Revenue, they are focused on their top-tier product lines and are working to drive sales of those items. The blending math works the same way …

Given this blended measurement of Success, we can make a reasonable comparison of the two teams. Within their respective environments, Neisler, Savino, and Varga are “top performers”, while Lum and Hoehne need a lot of work.
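Because every blended score lands on the same 0–100% scale, reps from both teams can share one ranking even though their metrics differ. A minimal sketch, assuming MarketCo keeps margin and customer satisfaction and swaps Total Revenue for a hypothetical `top_tier_sales` metric; every floor, ceiling, rep name, and result below is made up for illustration:

```python
from statistics import mean


def success_score(value, floor, ceiling):
    """Scale a raw metric to 0.0 (floor) .. 1.0 (ceiling), clamped."""
    fraction = (value - floor) / (ceiling - floor)
    return max(0.0, min(1.0, fraction))


# Each team keeps its own metrics; all (floor, ceiling) pairs are hypothetical.
TEAM_METRICS = {
    "SellCo": {
        "avg_deal_margin": (0.10, 0.50),
        "total_revenue": (0, 2_000_000),
        "customer_satisfaction": (1.0, 5.0),
    },
    "MarketCo": {
        "avg_deal_margin": (0.10, 0.50),
        "top_tier_sales": (0, 500_000),
        "customer_satisfaction": (1.0, 5.0),
    },
}

# Hypothetical raw results, keyed by (team, rep).
results = {
    ("SellCo", "Rep A"): {"avg_deal_margin": 0.42, "total_revenue": 900_000, "customer_satisfaction": 3.8},
    ("MarketCo", "Rep X"): {"avg_deal_margin": 0.25, "top_tier_sales": 450_000, "customer_satisfaction": 4.2},
}


def blended_score(team, raw):
    """Blend a rep's results using that rep's own team's metrics."""
    metrics = TEAM_METRICS[team]
    return mean(success_score(raw[name], lo, hi) for name, (lo, hi) in metrics.items())


# All scores share the 0..1 scale, so both teams fit in one leaderboard.
leaderboard = sorted(
    ((blended_score(team, raw), team, rep) for (team, rep), raw in results.items()),
    reverse=True,
)
for score, team, rep in leaderboard:
    print(f"{rep} ({team}): {score:.0%}")
```

Each rep is scored only against their own team’s floors and ceilings, so the shared leaderboard is only as fair as those choices.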

At this point, our blended rating may be of passing interest as the two sales orgs compare best practices and discuss the relative positions of their top performers. In the next post in this series, we will look specifically at their use of a CRM system and see if there is a correlation.

Previously …

- Who is Using the System? Tracking in the Enterprise

- Tracking in the Enterprise – Logging Utilization Simply and Consistently

Next up …

- Tracking in the Enterprise – Correlation versus Causation
