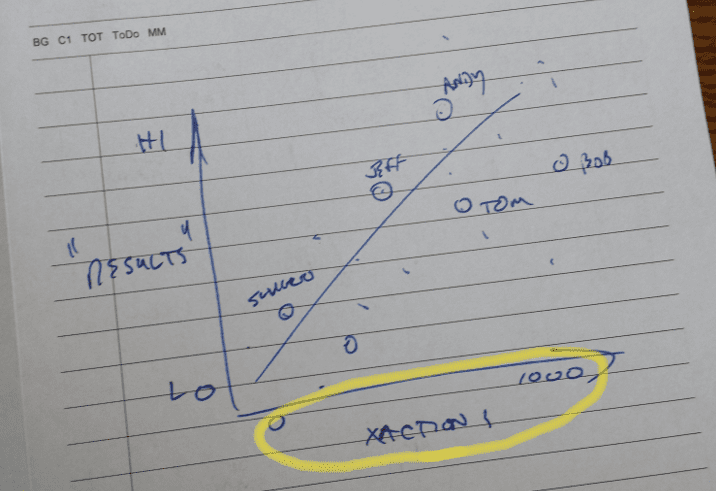

We’re trying to use metrics to visualize the correlation between system use and business results – any system, regardless of the platform / technology – and we’d like to draw the same picture of input vs. results for any system. I’m stealing some inspiration from Tufte here – let’s keep the story simple, clean, and consistent, so my audience can focus on the question (WIIFM: what’s in it for me?). And it’s meant to be a universal question – it doesn’t matter what system we are talking about; we’d like to understand whether winning or losing is driven by using this fancy technology we just paid for.

The ideal picture is relatively simple – for a list of people (or teams), plot how much they are using the system versus the measurement of success. In my next post, we’ll talk about “measuring success” – for now, let’s just dive into the arcane world of transaction logs (sorry if this gets a little techie, but it’s a key piece of the puzzle).

It’s Big, It’s Heavy, It’s Wood

One of our key assumptions is that every technology / application / platform keeps an event log of some sort. The formats can be wildly different, and the amount of detail can vary – but a basic audit trail, change log, or status record of some sort is our baseline requirement. Of course, I want a consistent correlation picture, so I will translate them all into an aggregated log, with only enough detail to support the right visualizations. I’m not too worried about losing detail here – we aren’t looking to replace log reporting functionality for all enterprise apps. However, I want to anticipate what kind of information will help visualize what is / is not happening.

A Generic Log (glog?) specification might look something like this; please note that I do not know of any ANSI-standard generic event-log-vocabulary publication, so we’ll use multiple technical platforms for examples. With that said, I should be able to pull the following basic information from any event log:

- Object Type (aka Transaction Type) – I’d expect to see a single record in my Event Log for each type of object I have “touched” in some way. Web servers would give me Sites and Pages. File systems and collaboration environments should tell me about individual documents in Document Libraries. SharePoint should spit out details on the Items in all of those Lists (Calendars, Blogs, Comments, Discussion Forums, News Feed Items, News Feed Comments, etc.). I’m looking for T-codes from SAP, and a list of Reports from my report writing system.

- Events – as many as we might need, such as (but not limited to) … Created, Modified, Read / Viewed. Depending on the system, it might be tough to get every kind of event – in those cases, consider the 80/20 Prioritization rule (on the first pass, can we spend 20% of the time to get 80% of the value?)

- Event Attributes – I would like to get a record of:

    - System: Name / instance of the system – ex. “JDE Ver 9.0 Production”

    - Who: Who originated / created the object. This should be captured as a User ID

    - Object Type: What are we looking at here?

    - Object Name: Develop some kind of convention here, and keep it flexible; ex. if a document / file, the name of the file. If a blog post, the title of the post. If an Announcement – just the word “Announcement” would be fine

    - Event Description: Longer text; develop some kind of convention, and be flexible. Ex. if a document / file, blank. If a blog post – first 100 characters of the post. If an Announcement – first 100 characters of the post

    - When: Date and time that the Event happened
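To make the attribute list concrete, here is a minimal Python sketch of one glog record and a writer that emits it as a fully quoted CSV line. The `GlogRecord` class, its field names, and the `write_glog` helper are my own illustrative assumptions, not part of any standard; the field order simply mirrors the sample data in this post (System, Who, Object Type, Object Name, Event, Event Description, When).

```python
import csv
import io
from dataclasses import dataclass, astuple


@dataclass
class GlogRecord:
    """One event in the generic log (field names are hypothetical)."""
    system: str        # name / instance of the system
    who: str           # user ID that originated the event
    object_type: str   # what kind of object was touched
    object_name: str   # file name, post title, etc.
    event: str         # Created, Modified, Open, ...
    description: str   # longer free text, may be blank
    when: str          # timestamp as a text string


def write_glog(records, out):
    """Write records to a file-like object, one quoted CSV line per event."""
    writer = csv.writer(out, quoting=csv.QUOTE_ALL, lineterminator="\n")
    for record in records:
        writer.writerow(astuple(record))


# Usage: write a single record to an in-memory buffer and show it.
buffer = io.StringIO()
write_glog(
    [GlogRecord("MiniCharter v14.03", "jkirk", "Word", "Mini Charter Template",
                "Open", "Mini-Charter / Print worksheet has been opened",
                "2014-06-10 03:00:16 PM")],
    buffer,
)
print(buffer.getvalue(), end="")
```

Quoting every field is a deliberate choice here: it sidesteps the question of which descriptions happen to contain commas.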

A sample data set might look something like this …

[sourcecode language=”plain”]
"MDM v13.05","ehavalanch","Application","MDM Edit Page","Open","MDM form has been opened (Edit People / Resources)","2014-06-17 03:20:12 PM"

"MDM v13.05","jweinhart","Application","MDM Edit Page","Open","MDM form has been opened (Edit People / Resources)","2014-06-19 10:45:22 AM"

"MiniCharter v14.03","jkirk","Word","Mini Charter Template","Open","Mini-Charter / Print worksheet has been opened","2014-06-10 03:00:16 PM"

"Mysearch v14.04","gsalisbury","Search Page","MySearch Main","Execute Search","Smart system","2014-05-16 02:24:32 PM"

"Mysearch v14.04","kmooney","Search Page","MySearch Main","Execute Search","15 15 15","2014-05-19 05:58:12 AM"

"Mysearch v14.04","tfranklin","Search Page","MySearch Main","Execute Search","CAD CAM","2014-05-16 04:29:32 PM"

"Mysearch v14.04","bfeller","Search Page","MySearch Main","Execute Search","habanero","2014-05-16 03:07:45 PM"

"ProjectData v14.05","ehavalanch","Excel","Project Data Sheet","Open","Project Data worksheet has been opened","2014-06-22 09:36:42 AM"

"ProjectList v13.07","gtess","web app","Open Projects Page","Open","Contract/Project List Maintenance app has been opened","2014-06-22 09:08:31 AM"

"ProjectList v13.07","gtess","web app","Project Summary","Edit","Project Maintenance page has been opened","2014-06-22 09:01:21 AM"

"ProjectList v13.07","gtess","web app","Project Comments","Edit","Project Comments page has been opened","2014-06-22 08:44:12 AM"

[/sourcecode]
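Since the whole point is to plot how much each person uses a system, here is a rough Python sketch of reading records like the ones above and counting events per system and user. The `usage_by_user` function and the `SAMPLE` constant are hypothetical names for illustration, and the sketch assumes the user ID is always the second field, as in the sample data.

```python
import csv
import io
from collections import Counter

# A few rows copied from the sample data set, blank lines included.
SAMPLE = '''"ProjectData v14.05","ehavalanch","Excel","Project Data Sheet","Open","Project Data worksheet has been opened","2014-06-22 09:36:42 AM"

"ProjectList v13.07","gtess","web app","Open Projects Page","Open","Contract/Project List Maintenance app has been opened","2014-06-22 09:08:31 AM"

"ProjectList v13.07","gtess","web app","Project Summary","Edit","Project Maintenance page has been opened","2014-06-22 09:01:21 AM"

"ProjectList v13.07","gtess","web app","Project Comments","Edit","Project Comments page has been opened","2014-06-22 08:44:12 AM"
'''


def usage_by_user(lines):
    """Count glog events per (system, user) pair."""
    counts = Counter()
    for row in csv.reader(lines):
        if not row:          # csv.reader yields [] for blank lines
            continue
        system, who = row[0], row[1]
        counts[(system, who)] += 1
    return counts


counts = usage_by_user(io.StringIO(SAMPLE))
for (system, who), total in sorted(counts.items()):
    print(f"{system}\t{who}\t{total}")
```

The resulting counts are the "how much are they using it" axis; the success measure for the other axis would have to come from somewhere else.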

Destination and Automation

My ultimate goal is to create consistent visualizations for any system – so I will want these glog files to be identical in format. We need to keep the technical spec simple – so any reasonably capable system could generate them. The data should be stored in a simply formatted (.CSV, for example) text file – here’s a nicely detailed set of specifications that covers, among other things, the ever-popular quoted strings issue. Also, be sure to pick and document a standard, simple format for Dates; for example, I prefer that they are expressed as text strings, in a format like YYYY-MM-DD HH:MM:SS AM/PM.

<aside>Is there an ANSI / RFC / SQL / techie standard name for this format? </aside>
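For what it’s worth, the date format above maps directly onto a `strftime` / `strptime` pattern in Python. This is just a sketch: `GLOG_DATE_FORMAT`, `format_when`, and `parse_when` are names I made up, and `%p` is locale-dependent, so the AM/PM text assumes the default (C / English) locale.

```python
from datetime import datetime

# YYYY-MM-DD HH:MM:SS AM/PM, using a 12-hour clock (%I) plus AM/PM (%p).
GLOG_DATE_FORMAT = "%Y-%m-%d %I:%M:%S %p"


def format_when(moment):
    """Render a datetime as a glog timestamp string."""
    return moment.strftime(GLOG_DATE_FORMAT)


def parse_when(text):
    """Parse a glog timestamp string back into a datetime."""
    return datetime.strptime(text, GLOG_DATE_FORMAT)


# Usage: round-trip one of the timestamps from the sample data.
parsed = parse_when("2014-06-17 03:20:12 PM")
print(parsed.isoformat())
print(format_when(parsed))
```

Keeping the pattern in one named constant means every glog generator and every report reader can share the same definition.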

You’ll also want to create an automated method for extracting utilization data from the various systems in your environment; this should be a “workmanlike”, technical solution – no fancy user interface required. Our ultimate use will probably be some kind of command line utility / console app that pulls the data on a scheduled or ad-hoc basis. The program will probably be specific to each system we are comparing, but every “glog generator” should work the same way: it is driven by command line parameters or an external parameter file, reads through the native logs or transaction files for the system, and generates our simply formatted text file, one record per transaction. Since we eventually want this to run on a scheduled, regular basis, we should be able to specify the following as command line arguments:

- Source (for multi-instance systems)

- Destination (folder / file name for the output file)

- From – To Dates (you won’t want to grab all of history each night; just the last few days, as a fault-tolerance hedge against unanticipated down time)

- User ID / Password (system account with the proper security)

- Config File name (an alternative way to provide parameters)

- Logging, verbosity, error codes, parm help, and other cool things that any self-respecting command line program should have
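The argument list above could be sketched with Python’s standard `argparse` module. Everything here is an assumption for illustration: the program name `glog-generator`, the flag names, and the three-day default window are mine, and the actual log extraction (and reading of the config file) is left out because it would be specific to each system.

```python
import argparse
from datetime import date, timedelta


def build_parser():
    """Build the command line interface for a hypothetical glog generator."""
    today = date.today()
    parser = argparse.ArgumentParser(
        prog="glog-generator",
        description="Extract usage events from a system log into a glog CSV file.",
    )
    parser.add_argument("--source", help="system instance to read from")
    parser.add_argument("--dest", required=True, help="output folder / file name")
    # Default to the last few days rather than all of history.
    parser.add_argument("--from-date", type=date.fromisoformat,
                        default=today - timedelta(days=3),
                        help="first day to extract (YYYY-MM-DD)")
    parser.add_argument("--to-date", type=date.fromisoformat, default=today,
                        help="last day to extract (YYYY-MM-DD)")
    parser.add_argument("--user", help="system account used for the extract")
    parser.add_argument("--password", help="password for the system account")
    parser.add_argument("--config", help="parameter file, as an alternative")
    parser.add_argument("-v", "--verbose", action="count", default=0,
                        help="repeat for more detailed logging")
    return parser


# Usage: parse an explicit argument list, as a scheduler might supply it.
args = build_parser().parse_args(
    ["--dest", "mysearch.csv", "--from-date", "2014-06-20",
     "--to-date", "2014-06-22", "-vv"]
)
print(args.dest, args.from_date, args.to_date, args.verbose)
```

`argparse` supplies the `--help` text for free, which covers the “parm help” item without any extra work.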

Keep this piece light and flexible. Since you will eventually want to pull data from all sorts of systems, running on a variety of platforms and potentially generating tons of transaction details, it’s wise to keep these log processing tools as simple as possible.


This Post Has 2 Comments

I’m liking where this is going. Any follow ups how you use the data?

Yes! I have updated this post with links at the end, to the next two posts in the series.